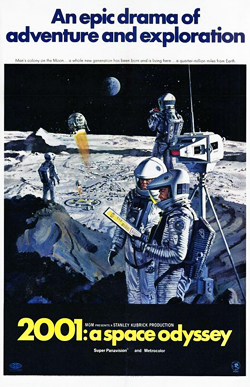

My favorite science fiction movies are the ones that don’t spend two and a half hours yelling and throwing things at my face. This is why I recently watched 2001: A Space Odyssey again. It’s quiet and slow and never boring.

(I assume anyone reading this knows the story: a monolith forcibly evolves a prehuman; millions of years later, the discovery of another monolith prompts a mission to Jupiter; the ship’s computer goes crazy and kills most of the crew; yet another monolith turns the survivor into a magic space baby. Level up!)

The Movie

2001 looks strikingly different from current Hollywood science fiction. At the moment the coolest future Hollywood can imagine is one drained of all colors but dirty gray, dim gunmetal blue, and body-fluid orange. Apparently sometime in the 21st century the visible spectrum will contract Seasonal Affective Disorder. But then, nearly every Hollywood future is either an apocalypse to struggle through or a dystopia for a self-absorbed hero to topple explodily, so I understand why the color graders are depressed. 2001 has its share of beige and sterile white, but, y’know, it’s a cheerful sterile white. And it’s joined by the computer core where David Bowman lobotomizes Hal, lit with the dark red of an internal organ; and Bowman’s mysterious minty-fresh hotel room; and a rack of spacesuits that might have been sponsored by Skittles bite-size candy. 2001’s future might be worth looking forward to–new worlds to explore, new life forms to discover, magic space babies to evolve into. It feels that way partly because the future is pretty. Listen to the score: the spaceships don’t dock, they waltz.

Studios tend to pigeonhole SF as an action genre, and tend to assume too little of action movie audiences. I often bail on these movies for being too loud, too fast, and too dumb. It’s interesting how little 2001 explains, and how little it needs to. 2001 gives just enough information to suggest what’s happening, and trusts the audience to make connections. The movie doesn’t tell us why Hal kills Discovery’s crew. Hal proudly tells an interviewer that the Hal 9000 computer has never made an error. Hal reads Bowman’s and Poole’s lips as they debate shutting him down for repairs following his mistaken damage report. We can work it out for ourselves.1 I’m mildly jarred when, later, Hal explains to Bowman about the lip reading–Bowman didn’t know about it, so it’s not like this dialogue doesn’t make sense, but the audience knows from the way the movie cut between shots of the crew and Hal’s eye. Watching 2001 I get used to not listening to needless explanations. Over half the movie has no dialogue at all.2 It’s the most effective demonstration in sci-fi film of the principle of “show, don’t tell.”

2001 spends a surprising amount of time watching people run through commonplace routines, the kind of action most movies gloss over. The “Dawn of Man” sequence shows how the prehumans live before the monolith shows up because we need to see how they began in order to understand how they’ve changed. But you might wonder why 2001 shows every detail of Heywood Floyd’s trip to the moon–sleeping on the shuttle, eating astronaut food, going through the lunar equivalent of customs, and calling his daughter on a videophone. When the film moves to the Discovery the plot doesn’t start rolling again until we know David Bowman’s routine, too.

In 1968, science fiction was in the thick of the New Wave, a label given to the younger SF writers writing with more attention to good prose, rounded characters, and just generally the kinds of ordinary literary qualities that make fiction readable. These were never entirely absent from science fiction, but the “golden age” of the genre was dominated by a functional, didactic style exemplified by the work of Isaac Asimov. Some fans call science fiction the “literature of ideas.”3 In golden age SF, the ideas were king and everything else existed only to serve them. The characters were mouthpieces for the ideas. The prose was kept utilitarian–“transparent” was the usual term–to transmit the ideas with minimal friction. Writers used less of the implicit worldbuilding that dominates modern SF, relying on straightforward exposition to describe the world and particularly the scientific gimmicks they’d built their stories around. Stories often described in intricate detail actions that, to the characters, were routine.

Translate these expository passages to film and you have the scenes of Heywood Floyd taking a shuttle to the moon. This is not inherently bad. I’d argue that it takes more talent and effort to write a good book in this grain than it does to write traditional fiction, but a witty or eloquent writer can do as he or she pleases.4 So can filmmakers as proficient as Stanley Kubrick and Douglas Trumbull, 2001’s effects supervisor. I can see why some viewers lose patience with 2001, but I personally am not bored.

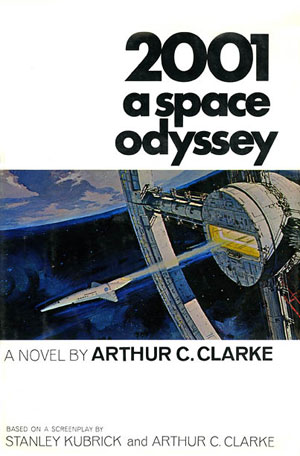

The Book

Of course, 2001 does exist in prose. 2001 was a collaboration between Stanley Kubrick and Arthur C. Clarke; while Kubrick wrote the script, Clarke wrote the novel. The movie was so far ahead of its time that, even with its 1968-era design work, it still looks fresh. So when I followed up this viewing of the movie with Clarke’s novel, which I hadn’t read in so long that I’d forgotten it completely, I was surprised it was so old-fashioned. In retrospect, I shouldn’t have been: 2001 the movie’s virtuoso style is built from an old-fashioned plan. I just hadn’t noticed until I reread the novel, which seems unaware the New Wave ever happened. Clarke’s prose lacks the style of Kubrick’s direction, and without actors to give them life the characters are revealed as perfunctory sketches, functions rather than subjects. They demonstrate aspects of the universe, and witness its wonders, but the universe itself is what matters.

It’s striking how much space Clarke devotes not to telling us what someone is doing now, but what they would do, could do, were doing, or had been doing. Sometimes 2001 reads like the kind of nonfiction book you’d give to children to teach them how grown-up jobs work. (“At midday, he would retire to the galley and leave the ship to Hal while he prepared his lunch. Even here he was still fully in touch with events, for the tiny lounge-cum-dining room contained a duplicate of the Situation Display Panel, and Hal could call him at a moment’s notice.”) Clarke’s purpose is not only, and maybe not even primarily, to tell a story. He wants to make the audience understand Heywood Floyd and Dave Bowman’s entire universe. Clarke’s 2001 is the opposite of Kubrick’s. The movie is gnomic, open to multiple interpretations. The book wants to tell us something, and it’s going to make it absolutely clear.

Unfortunately Clarke, while not really bad, doesn’t quite have the writing chops to deliver compelling exposition–or at least he wasn’t exercising them here. Still, the book occasionally improves on the movie, mostly by expanding on it. 2001 is over two hours long, but the book has more space.5 The movie’s “Dawn of Man” segment keeps its distance from its subjects. We don’t get to know any of the prehumans. We can’t really even tell them apart. The novel can get inside the mind of the prehuman Moon-Watcher. After Hal, he’s the most vivid character in the book. (On the other hand, it says something about 2001 that the most vivid characters are an ape-man and a paranoid computer.)

In both film and book, the climax of the “Dawn of Man” comes when Moon-Watcher intuits the concept that makes him, in Clarke’s words, “master of the world”: the tool. Specifically, a weapon–Moon-Watcher needs to hunt and to defend himself from leopards and rival bands of prehumans.

The movie then makes its famous jump cut from bone to satellite. The book tells us something the movie doesn’t: the satellite is part of an orbital nuclear arsenal. If the unease running under the surface of the novel’s middle section seems muted now, it’s because it needed no emphasis in 1968: it went without saying that humanity might soon bomb itself into extinction.

When Bowman returns to Earth as the Starchild, his first act is to destroy the nuclear satellites–and then the book repeats the “master of the world” line. If humanity made its first evolutionary leap when it picked up weapons, says 2001, its next great leap won’t come until it learns to put them down again. Apparently this theme appeared in an earlier draft of the movie’s script but didn’t make it into the final film. It doesn’t feel like the movie is missing anything–it is, after all, already pretty full–but the novel is better for the symmetry.

Evolviness

The theme shared by both novel and movie is evolution–and here we come to the original reason I started this essay: evolution in science fiction is weird. Several crazy evolutionary oddities crop up over and over in SF, and 2001 makes room for them all.

People who don’t know much biology often think evolution tries to build every species into its Platonically perfect ideal form. In this view, humans aren’t just more complex or more self-aware than the first tetrapod to crawl out of the ocean: we’re more “evolved.” This is as pernicious as it is foolish–in the early 20th century, true believers in evolutionary “progress” used the idea to justify eugenics and “scientific” racism.

Despite that, in many science fiction stories evolution has a direction. This direction is usually entirely unlike the direction the eugenicists were thinking of. Not usually enough, mind you–a few of these “perfect” humanoids look disturbingly blonde–but science fiction people mostly evolve into David McCallum in the Outer Limits episode “The Sixth Finger”–people with big throbbing brains and, more importantly, godlike powers. (Also, at least on TV, they tend to glow.) Depending on the story, this might be a metaphor for either social and technological progress (if the more highly evolved beings are wise and authoritative, like Star Trek’s Organians), or absolute power corrupting absolutely (if they’re assholes like Star Trek’s Gary Mitchell). The crew of the Enterprise met guys like this in every other episode of Star Trek–Gene Roddenberry’s universe has more alien gods than H. P. Lovecraft’s. In media SF huge heads are optional; powers aren’t. Especially in comic book sci-fi–think of the X-Men, the spiritual descendants of A. E. van Vogt’s Slan. Sometimes people evolve into “energy” or “pure thought”–or, in modern stories, minds uploaded as software. In Clarke’s own Childhood’s End the human race joins a noncorporeal telepathic hive-mind. In 2001, the Starchild destroys the Earth’s orbiting nukes with a thought. In science fiction, sufficiently evolved biology is indistinguishable from magic.

The suddenness of David Bowman’s transformation brings up another point: in science fiction, evolution happens very fast, not in gradual steps but in leaps. It works like the most extreme form of punctuated equilibrium you’ve ever seen–a species coasts for a few million years in placid stability, until bam: a superbaby is born! With three eyes and an extra liver and telepathy! In biology, this is known as saltation, or more colloquially as the “Hopeful Monsters” hypothesis. Nobody takes it seriously… except in science fiction, where you actually can make an evolutionary leap in a single generation. This is the premise of Childhood’s End, and The Uncanny X-Men, and Slan. Theodore Sturgeon used it in More Than Human. Sometimes this is a metaphor for the way an older generation struggles to understand its children. More often it’s simply artistic license. Evolution takes millions of years, but fiction, unless it’s as untraditionally structured as Last and First Men, deals with individual human lives. To talk about evolution, SF writers collapse its time scale to match the scale they have to work with.

Some SF, particularly in TV and film, twists saltation even further away from standard evolutionary theory: evolutionary leaps don’t just happen between generations. People can evolve–or devolve–in midlife, as David Bowman is evolved by the monolith. In the world of Philip K. Dick’s The Three Stigmata of Palmer Eldritch, for instance, you can go in for “evolutionary therapy” and come out with a Big Head. In my plot summary I used the words “level up” ironically, but it really is like these writers are powering up their Dungeons and Dragons characters–suddenly, the hero knows more spells. Written SF usually tells these stories with some kind of not-exactly-evolutionary equivalent–in Poul Anderson’s Brain Wave all life on Earth becomes more intelligent when the solar system drifts out of an energy-dampening field; the protagonists of Anderson’s “Call Me Joe” and Clifford Simak’s “Desertion” trade their human bodies for “better” bodies built to survive on alien worlds. TV shows just go ahead and let their characters “mutate.” Countless episodes of Star Trek and Doctor Who are built around this premise, the most awe-inspiring being Star Trek: Voyager’s legendarily awful “Threshold”, in which flying a shuttle too fast causes Paris and Janeway to evolve into mudskippers.6 This is, again, artistic license: stories focus on individuals, not species. The easiest way to write a story about biological change is through metaphor, by putting an individual character through an impossible evolutionary leap.

Most of the leaps I’ve cited in the last three paragraphs have something in common: they don’t involve natural selection. In science fiction, evolutionary leaps are triggered by outside forces. Sometimes an evolutionary leap is catalyzed by a natural phenomenon, like the “galactic barrier” encountered by Gary Mitchell on Star Trek. The latest trend in evolutionary catalysts is technological. Vernor Vinge has proposed that humanity is heading for a “Singularity”, when exponentially accelerating technological breakthroughs lead to superintelligence, mind uploads, immortality, and just generally a future our puny meatspace brains cannot predict or comprehend. The Singularity is the hard SF equivalent of ascension to Being of Pure Thoughtdom, leading to the less kind term “the Rapture of the nerds.” Plausible or not,7 the occasional singularitized civilization is de rigueur in modern space opera (not always under that name; for instance, lurking in the background of Iain Banks’s Culture universe are civilizations who’ve “sublimed”). Short of the Singularity, a good chunk of contemporary far-future SF involves transhumans or posthumans, people who’ve enhanced their bodies and minds technologically. A pioneering novel in this vein was Frederik Pohl’s Man Plus, about a man whose body is rebuilt to survive on Mars.

Singularities and transhumanism put humans in charge of our own evolution. 2001 puts human evolution in the hands of aliens, as do many other stories, including Childhood’s End. Octavia Butler’s Dawn and its sequels deal with humanity’s assimilation into a species of gene-trading, colonialist aliens. Both books are about humanity’s future evolution, but just as often the aliens have guided us from the beginning of human history, as in 2001–the idea even took hold outside of fiction, in Erich von Däniken’s crackpot tome Chariots of the Gods?. Star Trek explained the similarity of its mostly-humanoid species with an ancient race of aliens who interfered with evolution on many planets, including Earth. Nigel Kneale’s Quatermass and the Pit is a cynical take on the same concept.

So. Having (at tedious length) established that science fiction tends to get evolution (usually deliberately) wrong, what does it mean? Specifically, what does it mean for the ostensible subject of this essay, 2001?

Tales of weird evolution rarely depict change as evil.8 More often they’re about human potential. Evolution is more often a metaphor for progress and growth, personal or social: the Organians are “more evolved” than us not because they can turn into talking lightbulbs but because they possess more knowledge and wisdom. Stories of evolutionary leaps are about the hope that we can become more than we are, the growing pains we suffer in transition, and occasionally the fear that we might not be able to handle our new knowledge and abilities.

It gives me pause, though, that in science fiction growth is so often represented by a kind of evolution that doesn’t exist. As I’ve mentioned, there are narrative reasons for these oddities. An epiphany is more dramatic, and more suited to a story taking place in a limited time-frame, than a geologically slow ongoing process of becoming. And an epiphany can’t come out of nowhere–it needs a specific cause, one more narratively satisfying than the laws of biology. But what we end up with are stories of personal and social progress in which we don’t grow ourselves–we’re grown by outside forces. Our growth as human beings is an emergent property of accelerating technological change, or it’s granted to us by gods and monoliths. In 2001: A Space Odyssey Moon-Watcher doesn’t discover tools himself–the monolith implants the concept in his mind. The human race has to prove it’s worthy of the next step in evolution by traveling to the moon and then to Jupiter, but when David Bowman arrives the secrets of the universe are given to him.

The evolution metaphor in 2001–and in science fiction in general–is a weird, confused, disquieting tangle of optimism, hope, and cynicism. Humanity has the potential to be more than we are, but not by our own effort and not through any process we can control or understand. It’s like science fiction thinks we can’t get from here to wisdom without a miracle in between.

1. The book explicitly explains Hal’s behavior and its explanation is different from the one we’re led to believe in the movie.

2. The trivia section of 2001’s IMDB page gives the dialogue-free time as 88 minutes out of 141.

3. I am not one of them. The description is uselessly vague. What book isn’t about ideas?

4. This is why I bought Mark Twain’s Autobiography, on the face of it a thousand pages of random Grandpa Simpsonesque rambling: Mark Twain’s grocery lists are worth reading.

5. Sorry.

6. As a capper, they proceed to have baby mudskippers together.

7. I’m on the “not” side, myself.

8. When they are, they’re usually horror stories. Often they focus on a mad scientist who’s devolving people, or evolving animals, e.g. The Island of Dr. Moreau.